We open-sourced our debiasing module a few weeks ago.

In this post, we give an introduction to bias in computer vision models, we discuss our new research on debiasing models, and we show how you can debias your own model with our open-source code.

The use of facial recognition software is becoming more and more common around the world. Facial recognition algorithms are currently being used by the US government to match application photos from people applying for visas and immigration benefits, to match mugshots, and to match photos as people cross the border into the USA. However, a recent study showed that most of these algorithms exhibit bias based on race and gender. For example, some of the algorithms were 10 or 100 times more likely to have false positives for Asian or Black people, compared to white people. This means that a Black or Asian person is more likely to be arrested for a crime they didn’t commit. In fact, this has already happened in Michigan. A Black man was falsely arrested and detained for 30 hours because a machine learning algorithm picked his identity based on security camera footage of a different Black man.

This bias is also prevalent in other forms of computer vision research. For instance, researchers at Duke University recently released PULSE, an algorithm which upscales blurry/pixelated images using machine learning. It works by using StyleGAN to generate a brand-new face whose downscaled image is similar to the input. However, uploading a pixelated image of Obama results in a white man. In fact, many images of POC result in images of white people (Source: Twitter @Chicken3gg).

Some deep learning experts have speculated that this bias is due to the training dataset – most of the images used to train the machine learning model were white people. However, the bias is due to other more subtle reasons as well. See this NeurIPS 2019 talk by Emily Denton and this CVPR 2020 talk by Timnit Gebru for more information on bias that can arise in computer vision research.

Debiasing neural networks

There are many algorithms for debiasing computer vision models, but the majority of methods require retraining the entire model from scratch. This is not always practical, because it is becoming increasingly common for computer vision models to be fine-tuned from very large pretrained models on large datasets such as ImageNet, which can take hundreds of GPU hours to retrain. Instead, we have developed post-hoc debiasing techniques, which take as input a pretrained model and a validation dataset, and then debias the model through fine-tuning.

We recently released three new post-hoc algorithms for debiasing neural networks, as explained in this earlier blog post. The first two methods are zeroth order optimization techniques which work well for tabular data (such as Census data or Criminal record data), but are not powerful enough to effectively debias structured data like sets of images. In order to debias image datasets, we focus on our third algorithm – adversarial fine-tuning. This is a powerful algorithm that uses a technique that is similar to GANs to debias computer vision models. Our algorithm trains a new neural network, a discriminator, to predict the bias of the original neural network. This effectively gives us a proxy for the bias loss that is differentiable, enabling the use of first-order optimization techniques such as gradient descent to fine-tune the original neural network. Adversarial learning has recently been proposed as an in-processing method for debiasing, but it has never been used as a post-hoc debiasing method until our work.

Results on the CelebA dataset

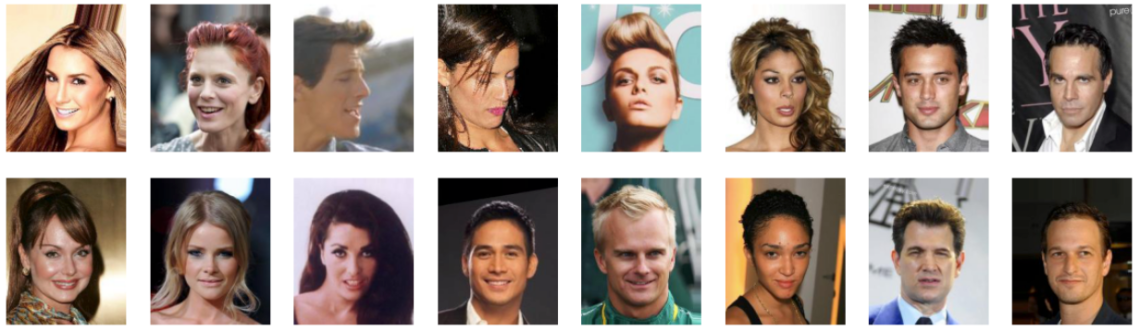

The CelebA dataset, shown above, is a popular image dataset used in computer science research. This dataset consists of 200,000 images of celebrity head-shots, along with attributes such as “smiling”, “young”, gender, and race. A number of groups have trained neural networks to predict these labels simply by looking at the photo. Disclaimer: machine learning researchers/practitioners should strive to get rid of datasets that give binary labels to attributes such as gender, attractiveness, and race, but our techniques will help to debias models that have already been trained on this type of dataset.

We found the dataset to contain bias for a number of reasons. For example, the dataset consists of 3.3% Black people and 73.8% white people. Since there are 22x more white people than Black people, models trained on this dataset often have biased predictions. We show that our algorithm is able to debias these models with respect to predicting attributes such as “smiling” and “young”. The results below show the results from the model before any debiasing algorithms were applied and after we operated on the model using one of our algorithms.

In our experiments, we used average odds difference (AOD) to measure bias, which computes the difference in the true positive rates and the false positive rates for the protected and unprotected classes. We see that the predictions are often improved for the protected class and unaffected for the unprotected class.

In both experiments we tried, the adversarial model improves *both* accuracy and bias, compared to the original model, and compared to another of our techniques, random perturbation. See our paper for the full results compared to benchmark debiasing algorithms.

To debias your own machine learning models, follow the instructions in the readme of our github repository.

- Improving Open-Source LLMs – Datasets, Merging and Stacking - January 11, 2024

- Open Source LLMs, Fine-Tunes and RAG Based Vector Store APIs - October 19, 2023

- Closing the Gap to Closed Source LLMs – 70B Giraffe 32k - September 25, 2023